Debunking Common ‘Human Slop’ Objections to Generative AI

Generative AI has sparked a familiar chorus of complaints: it “steals” from human artists, lacks intention, dilutes skill, floods the market, or produces derivative work. These are the tropes of reflexive panic, recycled from debates as old as photography, player pianos, and early mechanized composition. A careful look at history and artistic practice exposes the weaknesses of these claims.

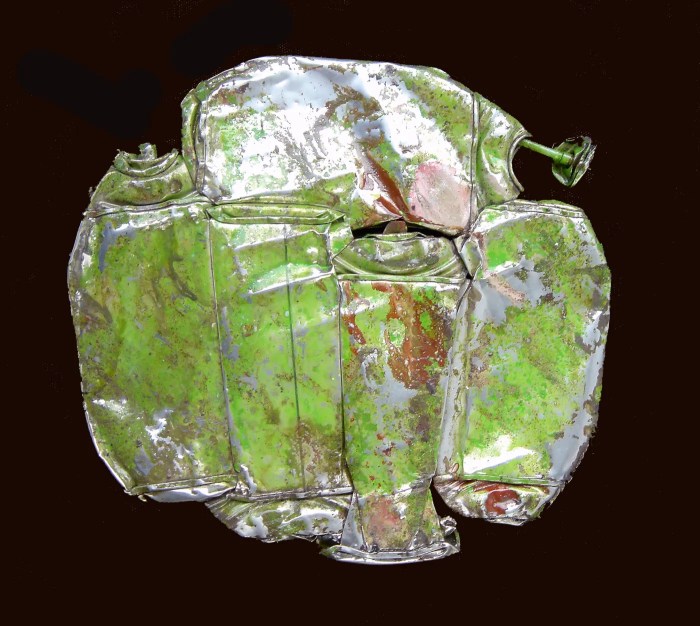

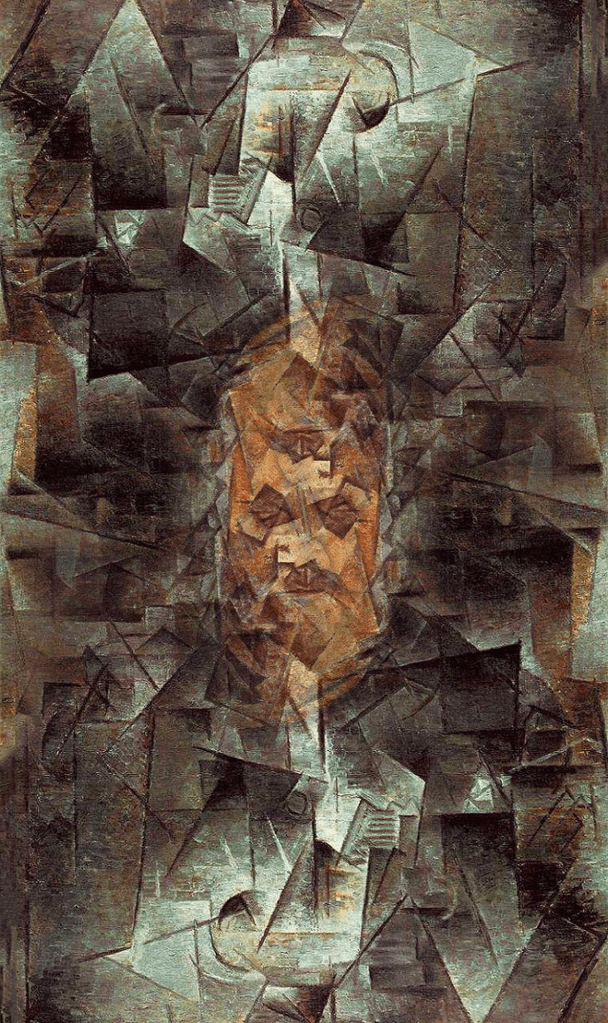

One recurring argument is that AI outputs are inherently “derivative” or “copied.” Critics insist that if a machine reproduces recognizable patterns, it is guilty of theft. Yet human artists have always worked this way: they study, memorize, abstract, and recombine the work of predecessors. Juan Gris did not “steal” from Picasso and Braque; he absorbed their Cubist vocabulary and transformed it into a distinctive artistic voice. The same logic applies to AI: recognizable patterns in an output no more constitute theft than the Cubist vocabulary visible in Gris’s paintings makes him a thief.

The claim that AI “lacks intention” is similarly overstated. Critics assume that the absence of human consciousness or subjective emotion in the generative process makes the output inauthentic. Yet photography, tape-loop music, and computer-assisted composition have long challenged this assumption. Human creativity often operates through delegation to instruments, systems, or processes: composers use synthesizers, writers use typewriters, musicians follow scores, and painters employ cameras as aids. Generative systems extend this principle: they are tools guided by human intention, not replacements for it. Brian Eno’s generative music, for example, demonstrates that systems can produce rich, compelling work while remaining fully artistic. His use of rule-based processes provokes admiration rather than panic, showing that machine-assisted creation is neither illegitimate nor intrinsically soulless.

A common anxiety is that AI devalues skill or bypasses years of training. Early critics of photography leveled the same claim: why spend years mastering realism when a camera can reproduce a scene mechanically? Yet skill did not disappear. Painters explored abstraction and expression, photographers developed new artistic vocabularies, and craft evolved. AI continues this pattern: technical proficiency remains relevant, but the forms and tools of practice shift. Mastery is no longer limited to replication; it now also includes curating, prompting, and integrating generative outputs.

Concerns about scale, speed, and volume are equally familiar. Critics point to the rapid proliferation of AI-generated images, videos, and music as evidence of a threat. But the history of art and music is replete with similar moments: photography industrialized image-making, player pianos mechanized music, and recorded sound disseminated performances on an unprecedented scale. These developments provoked panic, yet they were eventually absorbed into creative practice. AI amplifies these dynamics quantitatively, not qualitatively: the underlying principle, that mechanization accelerates production, remains the same.

The “data and consent” objection also collapses under historical scrutiny. Critics claim AI “steals” because it trains on existing works without explicit permission. But human artists never sought consent from every precedent they studied, memorized, or referenced. The camera never asked permission from the painter, yet photography became legitimate; the difference is in perception, not principle. Machine learning mirrors human learning: it absorbs patterns, abstracts them, and recombines them into new works. What critics call “copying” is not theft; it is how creation has always worked.

Finally, there is a structural hypocrisy in many contemporary critiques. Internet articles denouncing AI for its environmental cost, data use, or mechanization are themselves hosted and disseminated via energy-intensive data centers. Every click, post, and page load relies on the same digital infrastructure these oblivious, hypocritical critics decry. Online AI critiques are inseparable from the very systems that power AI.

The panic is moralistic, selective, and socially conditioned rather than logically or ethically consistent: it’s ‘human slop’.

Privilege also shapes reception. Brian Eno can deploy generative processes and receive critical acclaim; generative approaches from marginalized creators are more likely to be dismissed. Historically, innovations from African American musicians were often devalued until they were repackaged by white performers like Elvis Presley. The lesson is clear: objections to AI often intersect with existing social inequities in cultural gatekeeping. The technology itself is neutral; what matters is who wields it and how institutional and cultural power mediates its reception.

The recurring pattern is unmistakable: every complaint about AI (lack of originality, theft, mechanization, or devaluation of skill) has a precedent in artistic history. Photography, early electronic music, player pianos, sampling, collage, and generative compositional techniques all provoked similar anxieties, yet all were ultimately integrated into legitimate creative practice. AI is simply the latest instance of a familiar cultural tension: new tools provoke discomfort, but human ingenuity adapts, frames, and guides their use.

Criticism without historical perspective is a moral panic, not a reasoned argument.